- Home

- Details

- Registry

- RSVP

- Wolf howling

- How to activate lightroom free trial

- Painter adobe patcher 2018

- True autumn coporate palette

- Pia vpn windows icons

- Setup office 365 in outlook 2016 mac

- Mongose fire ball

- Matilde moisant

- Spacenet satellite

- Linguist job description

- Wilt chamberlain hows the weather up there

- The solution to the solar neutrino problem was

- Roccat kone driver not working

- Best home computer backup software for windows 10

- Prince of egypt full movie online free 123movies

- Playsets for backyard

- Black people with blue eyes

- Screenflow free license key

- Yo soy betty la fea reparto

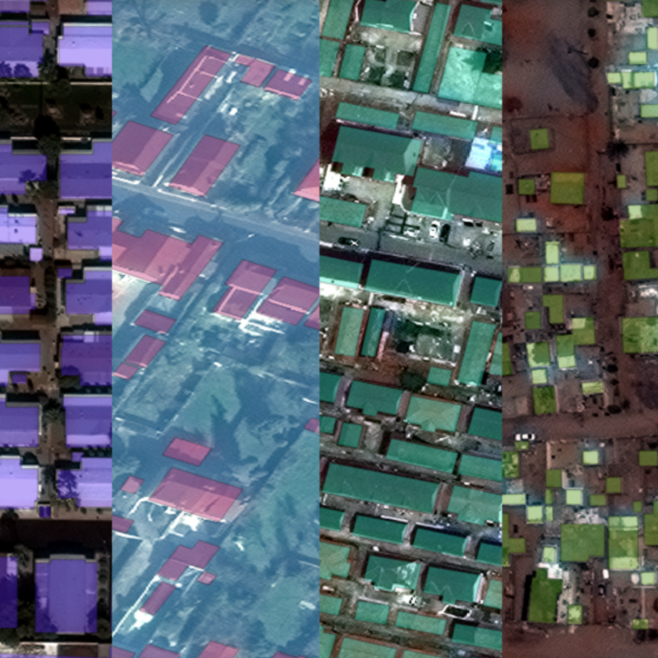

For me, Spacenet4 became the first serious DL competition. Honestly, many things went wrong, but I got an enjoyable and useful experience and managed to improve my skills. Maybe the most important thing I learned during this competition: the leaderboard is the only truth. Mistakes are easy to make anywhere, and how fast you find and fix them defines your chances to win.

In case you missed last year's posts about participation in similar challenges: Spacenet three: Road detector and Crowd AI Mapping challenge.

The domain - satellite images of an Atlanta suburb taken from different look angles (nadirs), separated into three groups: Nadir, Off Nadir, Very Off Nadir.

Nadir=25 and nadir=34 with different azimuths.

The core of the task - identify all building footprints.

Polygons are ground truth.

Model and data processing:

- UResNeXt101 (UNet + ResNeXt101) with transfer learning.
- Postprocessing with borders mask and watershed.

Some other tricks have been tried:

- A whole bunch of various encoders - SeNets were the most interesting ones.
- Attention - from my personal experience so far, attention doesn't make things worse, and here it helped too.
- Of course, MOAR layers gave some additional points.
- Input with 4 image channels instead of 3 - metrics went up a lot.
- Standard image augmentations - shift, crop, contrast, etc. I did not run enough experiments with these. Also, compared to other competitors in this challenge, our models converged 2–3x slower, probably due to non-ideal augmentations or the learning rate (LR) regime.
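The post only says "postprocessing with borders mask and watershed" and gives no further details. One common reading is an assumption here, not necessarily the author's pipeline:

- The network outputs a building probability map and a separate border probability map.
- Pixels that are building but not border become seeds, and each connected seed region gets its own label.
- A marker-controlled watershed grows the seeds back over the full building mask, so touching buildings come out as separate instances.

The sketch below uses plain Python lists. The names `split_buildings`, `label_components` and `watershed_from_seeds`, the 0.5 thresholds, and using `1 - building_prob` as the flooding elevation are all hypothetical choices.

```python
import heapq
from collections import deque
from typing import List, Tuple

Grid = List[List[float]]
Labels = List[List[int]]
NEIGHBOURS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # 4-connectivity


def label_components(mask: List[List[bool]]) -> Tuple[Labels, int]:
    """Give each 4-connected True region of `mask` its own integer label (1, 2, ...)."""
    h, w = len(mask), len(mask[0])
    labels = [[0] * w for _ in range(h)]
    count = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x] and labels[y][x] == 0:
                count += 1
                labels[y][x] = count
                queue = deque([(y, x)])
                while queue:  # breadth-first flood fill of one region
                    cy, cx = queue.popleft()
                    for dy, dx in NEIGHBOURS:
                        ny, nx = cy + dy, cx + dx
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and labels[ny][nx] == 0:
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count


def watershed_from_seeds(elevation: Grid, seeds: Labels, region: List[List[bool]]) -> Labels:
    """Marker-controlled watershed: flood outward from the seeds, lowest elevation first,
    never leaving `region`. An unlabelled pixel takes the label of the first flooded
    neighbour that reaches it."""
    h, w = len(elevation), len(elevation[0])
    labels = [row[:] for row in seeds]
    heap = []
    order = 0  # insertion counter: breaks elevation ties deterministically
    for y in range(h):
        for x in range(w):
            if labels[y][x]:
                heapq.heappush(heap, (elevation[y][x], order, y, x))
                order += 1
    while heap:
        _, _, y, x = heapq.heappop(heap)
        for dy, dx in NEIGHBOURS:
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w and region[ny][nx] and labels[ny][nx] == 0:
                labels[ny][nx] = labels[y][x]
                heapq.heappush(heap, (elevation[ny][nx], order, ny, nx))
                order += 1
    return labels


def split_buildings(building_prob: Grid, border_prob: Grid,
                    building_thr: float = 0.5, border_thr: float = 0.5) -> Tuple[Labels, int]:
    """Hypothetical post-processing: seeds = building minus border, then watershed over the building mask."""
    h, w = len(building_prob), len(building_prob[0])
    building = [[p > building_thr for p in row] for row in building_prob]
    seed_mask = [[building[y][x] and border_prob[y][x] <= border_thr for x in range(w)]
                 for y in range(h)]
    seeds, count = label_components(seed_mask)
    # Confident building pixels count as "low ground" and are flooded first.
    elevation = [[1.0 - p for p in row] for row in building_prob]
    return watershed_from_seeds(elevation, seeds, building), count
```

In this sketch, building pixels whose connected region contains no seed stay at 0. On full-size tiles, `scipy.ndimage.label` plus `skimage.segmentation.watershed` (which accepts `markers` and `mask` arguments) do the same job far faster than pure Python.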
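The post says a 4-channel input beat 3 channels and that the encoder was transfer-learned. It does not say what the fourth channel was or how the pretrained first layer was adapted to it. One common trick, assumed here, keeps the pretrained RGB filters and initialises each new input channel with the mean of the pretrained channels' kernels, so the network starts close to its pretrained behaviour. The sketch works on a plain nested-list weight of shape out x in x kh x kw; `expand_input_channels` and its `rescale` flag are hypothetical.

```python
from typing import List

Kernel = List[List[float]]        # kh x kw spatial kernel
ConvWeight = List[List[Kernel]]   # out_channels x in_channels x kh x kw


def expand_input_channels(weight: ConvWeight, new_in: int, rescale: bool = False) -> ConvWeight:
    """Widen a pretrained first-conv weight from its current input channels to `new_in`.

    Pretrained channels are kept and every extra channel starts as the mean of the
    pretrained kernels of the same filter. With rescale=True all values are then
    multiplied by old_in / new_in.
    """
    old_in = len(weight[0])
    if new_in < old_in:
        raise ValueError("new_in must be >= the current number of input channels")
    scale = old_in / new_in if rescale else 1.0
    expanded: ConvWeight = []
    for filt in weight:  # one filter per output channel
        kh, kw = len(filt[0]), len(filt[0][0])
        mean_kernel = [[sum(filt[c][i][j] for c in range(old_in)) / old_in
                        for j in range(kw)] for i in range(kh)]
        channels = list(filt) + [mean_kernel] * (new_in - old_in)
        # Build fresh nested lists so the result never aliases the input weight.
        expanded.append([[[v * scale for v in row] for row in k] for k in channels])
    return expanded
```

In PyTorch the same idea amounts to creating a new `torch.nn.Conv2d` with `in_channels=4` and copying these values into its `weight` tensor under `torch.no_grad()`. `rescale=True` (3/4 here) is one way to keep the first layer's output magnitude roughly unchanged when the extra channel has a value range similar to the RGB channels.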
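The author names shift, crop and contrast as the standard augmentations. For segmentation, the key detail is how each one treats the mask:

- Geometric transforms must be applied identically to the image and the mask. A random crop is one example, and its random offset also acts as a shift.
- Photometric transforms such as contrast touch only the image.

The sketch assumes a single-channel 8-bit image stored as a nested list. `random_augment`, the 0.8 to 1.2 contrast range and the crop size are hypothetical choices.

```python
import random
from typing import List, Tuple

Grid = List[List[float]]


def crop(grid: Grid, top: int, left: int, size: int) -> Grid:
    """Cut a size x size window whose top-left corner is (top, left)."""
    return [row[left:left + size] for row in grid[top:top + size]]


def adjust_contrast(image: Grid, alpha: float) -> Grid:
    """Stretch pixel values around the image mean by `alpha`, clipped to the 8-bit range."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    return [[min(255.0, max(0.0, mean + alpha * (p - mean))) for p in row] for row in image]


def random_augment(image: Grid, mask: Grid, size: int, rng: random.Random) -> Tuple[Grid, Grid]:
    """Same random crop (and hence shift) for image and mask; contrast jitter for the image only."""
    h, w = len(image), len(image[0])
    top = rng.randint(0, h - size)     # randint is inclusive on both ends
    left = rng.randint(0, w - size)
    alpha = rng.uniform(0.8, 1.2)
    return (adjust_contrast(crop(image, top, left, size), alpha),
            crop(mask, top, left, size))
```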
